Migrating from Self-Managed Docker to AWS Elastic Container Service

I recently migrated our four containerized microservices from self-managed Docker to AWS ECS. We are adding a lot more vineyards (the addition of California more than doubles the number of vineyards in our database, and a slew of other states aren't far behind), so I want to make sure the Cork Hounds web application is highly available within a single Region. However, I do not want to run a self-managed Docker Swarm or Kubernetes cluster, because that takes too much of my time and attention. Therefore, I decided to use the Elastic Container Service so that AWS can manage the auto-scaling and failover complexity for me. Using ECS also lets me replicate my task definitions to another Region in relatively short order should I want to scale to a multi-Region setup.

Overview

With ECS, you can run your containers on EC2 Instances or on the new AWS Fargate service. Right now, it is not yet possible to make an Elastic File System (EFS) available to containers deployed to Fargate; however, AWS did confirm this is in their backlog. Our blog relies on a local Ghost container and our Amazon Alexa Skill relies on a Foursquare TwoFishes container, and both need persistent disk storage for their assets. Therefore, I opted to use EC2 Instances with EFS, but I can easily spin up our web container in Fargate to scale out if needed.

Shared Persistent Storage

If your containers need to read and write content stored on disk, I recommend you look at using EFS as opposed to EBS. According to the EFS FAQ, "EFS provides a file system interface, file system access semantics (such as strong consistency and file locking), and concurrently-accessible storage for up to thousands of Amazon EC2 instances." Network-attached storage is perfect for an ECS EC2 instance cluster.

EBS on the other hand is equivalent to an attached disk on a single instance. When using ECS with EC2 instances in an auto-scaling group, you probably don't want to manage your content (especially database synchronization) across EBS volumes that are coming and going as the cluster scales.

There is a great tutorial for setting up EFS with ECS and EC2 Instances. The tutorial is very straightforward, providing step-by-step instructions.

Creating a Cluster

The first thing you'll need to do is create a Cluster. You can choose from (a) setting up Networking only (the VPC and Security Group), (b) configuring Linux Instances and Networking, or (c) configuring Windows Instances and Networking. The choice is yours; read up on creating a cluster. We prefer Linux because it has less overhead and Linux Containers are the norm, meaning you'll likely have less hassle. During this setup, you'll be faced with the following decisions:

- Instance Configuration - Determine the number and type of instances you want to use. In my opinion it is better to overestimate a bit; you can always create a new cluster and downsize your EC2 instance type. By default, the Amazon ECS Instance will have an 8 GiB root volume and a 22 GiB data volume that Docker uses for storing images. This has proven good enough for us and we're pushing images all the time.

- Networking - Set your network configuration. Use an existing VPC and Security Group, or create new ones.

- Container instance IAM role - The IAM role you want to attach to the Instance. More info here.

Once complete, you can view your cluster resource utilization on the ECS dashboard.

Services, Task Definitions and Container Definitions

If you are not already familiar with ECS terminology and concepts, it would be good to read the AWS Getting Started documentation on ECS to get acquainted. Key concepts include Clusters, Instances, Services, Tasks, Task Definitions, Container Definitions, and the Container Agent.

For our implementation, I have created an ECS Cluster (collection of EC2 Instances), four primary ECS Services (specification for managing one or more instantiations of a Task Definition, load balancing, auto-scaling, etc), four primary Task Definitions (definition of container properties) and four Container Definitions. You may want to do something differently.

In our case, I wanted to keep all the container services, task definitions and container definitions separate so that I could fine-tune their behavior individually. Alternatively, there are some shared behaviors amongst our containers that would allow me to manage two specific sets of parameters (rather than four): user facing (blog and web container definitions), and support services (2 other container definitions). This means in the future I could have just 2 Container Services, each one pointing to a Task Definition, with each of those referencing two Container Definitions. You'll need to do some research to figure out the ECS architecture that will work best for you.
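To make the two layouts concrete, here is a small sketch that models each one as a mapping from Service to the containers in its Task Definition. The names `support-a` and `support-b` are placeholders I made up for the two support containers; only the web and blog names come from the text above.

```python
# Layout used in this article: one Service and one Task Definition per container.
SEPARATE = {
    "web-service": ["web"],
    "blog-service": ["blog"],
    "support-a-service": ["support-a"],  # placeholder name
    "support-b-service": ["support-b"],  # placeholder name
}

# Possible future layout: two Services, each Task Definition holding two containers.
GROUPED = {
    "user-facing-service": ["web", "blog"],
    "support-service": ["support-a", "support-b"],
}


def summarize(layout):
    """Return (number of services, number of containers) for a layout."""
    return len(layout), sum(len(containers) for containers in layout.values())


print(summarize(SEPARATE))
print(summarize(GROUPED))
```

One trade-off to keep in mind with the grouped layout: containers in the same Task are launched together, so they also scale together.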

It is possible to define and run a task definition independently of a Service. If you are not running a user-facing website or data service, then this may be the best option for you. However, if like me you are deploying a website that you want running 24/7/365 on ECS, it is best to orchestrate your container(s) via Container Services.

Before we build our ECS Services, we should configure our Task Definitions.

Task Definitions

A Task Definition defines a set of parameters for hosting one or more Container Definitions. Any settings you specify here will apply to all containers associated with this Task. These configuration parameters are further explained in the AWS developer guide.

- Name - To make things simple, I would recommend using a standard naming convention, e.g. web-task-definition, blog-task-definition, user-facing-task-definition, etc.

- Network Mode - The type of network mode that you want to use. We chose to stick with the default bridge networking type; however there are other choices.

- Task Size - For setting fixed sizes for memory and CPU. These fields are required for Fargate, but are optional for EC2. There are good descriptions regarding the allowed settings if you need to use these parameters.

- Task memory - Hard limit; the container is killed if this value is exceeded. A word of caution: you really need to understand the memory requirements of your containers to set this value. Otherwise you'll have a lot of unnecessary container restarts. Excess memory can be shared amongst containers managed by this Task Definition. We left this blank.

- Task CPU - Hard limit; determines the amount of vCPU to allocate to your container(s). Excess compute can be shared amongst containers managed by this Task Definition.

- Task Placement - If you are using EC2, you can specify the availability zone, instance type, and other custom attributes that influence where the task is deployed.

- Container Definitions - A Task Definition references one or more Container Definitions, each containing specific instructions for a specific container.

- Volumes - This is where you can reference your EFS volume. Set a name and then the source path. If you followed the tutorial, AWS gave the example mount point of /efs. In this case, your source path would be /efs. Because we split our tasks up, one per container, I reference a subfolder here, e.g. /efs/blog.

A Task Definition is immutable once saved, and each revision results in a new version of the Task Definition. You'll notice the version at the end of the Definition name marked by a colon (:) and version number.
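As a rough illustration of the settings above, the sketch below builds a request body shaped like the input to the ECS RegisterTaskDefinition API. It only constructs and prints a dictionary; if you have boto3 installed and credentials configured, it could be passed as `boto3.client("ecs").register_task_definition(**blog_task)`. The volume name, image URL and memory value are hypothetical, not our production values.

```python
import json


def build_task_definition(family, volume_name, source_path, container_definitions):
    """Build a dict shaped like the RegisterTaskDefinition request."""
    return {
        "family": family,
        "networkMode": "bridge",  # the default network mode we stuck with
        "requiresCompatibilities": ["EC2"],
        # Host volume pointing at a subfolder of the EFS mount on the instance.
        "volumes": [{"name": volume_name, "host": {"sourcePath": source_path}}],
        "containerDefinitions": container_definitions,
    }


def revision_name(family, revision):
    """Each saved revision is addressed as family:revision."""
    return f"{family}:{revision}"


blog_task = build_task_definition(
    family="blog-task-definition",
    volume_name="blog-efs",  # hypothetical volume name
    source_path="/efs/blog",
    container_definitions=[
        {
            "name": "blog-container-definition",
            # Hypothetical account number, region and repository.
            "image": "123456789012.dkr.ecr.us-east-1.amazonaws.com/blog:latest",
            "memoryReservation": 256,  # hypothetical soft limit in MiB
            "essential": True,
        }
    ],
)

print(json.dumps(blog_task, indent=2))
print(revision_name("blog-task-definition", 3))
```

Task memory and Task CPU are deliberately omitted here, matching the choice to leave them blank on EC2.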

Container Definitions

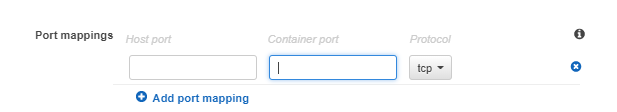

If you have used Docker before, you'll know that there are quite a few parameters that you can set for a container. I'll highlight the most relevant elements to running user-facing services like a web site and blog on ECS, and leveraging EFS. I'll also provide the settings we use where it makes a difference.

- Standard

- Container Name - I recommend using a standard naming convention, e.g. web-container-definition, blog-container-definition, etc.

- Image - The image URL to ECR (or another image repository in the future), e.g. accountNumber.dkr.ecr.region.amazonaws.com/repository:tag

- Memory Limits - You must set at least one value, either a hard limit or a soft limit for the Container Definition. You can set both if you desire.

- Memory Reservation (Soft Limit) - The minimum memory you want to allocate to the container. We monitored our container stats (using 'docker stats' and container service monitoring in CloudWatch) to arrive at a minimum number for each Container.

- Memory (Hard Limit) - Optional. The maximum memory you want to allocate to the container. This is a hard limit; the container is killed if this value is exceeded. A word of caution: you really need to understand the memory requirements of your containers to set this value. Otherwise you'll have a lot of unnecessary container restarts.

- Environment

- Essential - All tasks must have at least one essential container.

- Storage and Logging

- Mount Point - This is where you select one of the Volumes you defined for the Task Definition. Simply choose the name of the mount point you want to use from the dropdown.

- Log Configuration - I prefer to use the awslogs log driver to send all logs to CloudWatch Logs. You'll need to create a log group, and set your region and stream prefix.

We ignored all the other settings. Further, we chose to set minimum Memory Reservations rather than upper limits because I didn't want our containers killed off if they spiked in memory use.
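The settings above map onto a single entry in the Task Definition's `containerDefinitions` list. The sketch below is one way to assemble such an entry; the field names follow the ECS task definition format, while the image URL, memory value, container path, volume name and log group are hypothetical values chosen for illustration.

```python
def build_container_definition(name, image, memory_reservation_mib,
                               volume_name, container_path,
                               log_group, region, stream_prefix):
    """Build one containerDefinitions entry with a soft memory limit only."""
    return {
        "name": name,
        "image": image,
        # Soft limit in MiB. No hard "memory" limit is set, so the container
        # is not killed when it spikes above this reservation.
        "memoryReservation": memory_reservation_mib,
        "essential": True,
        # sourceVolume must match a volume name declared on the Task Definition.
        "mountPoints": [
            {"sourceVolume": volume_name, "containerPath": container_path}
        ],
        "logConfiguration": {
            "logDriver": "awslogs",
            "options": {
                "awslogs-group": log_group,
                "awslogs-region": region,
                "awslogs-stream-prefix": stream_prefix,
            },
        },
    }


blog_container = build_container_definition(
    name="blog-container-definition",
    image="123456789012.dkr.ecr.us-east-1.amazonaws.com/blog:latest",  # hypothetical
    memory_reservation_mib=256,                  # hypothetical
    volume_name="blog-efs",                      # hypothetical
    container_path="/var/lib/ghost/content",     # assumed content path for Ghost
    log_group="/ecs/blog",                       # hypothetical log group
    region="us-east-1",
    stream_prefix="blog",
)
print(blog_container["logConfiguration"]["options"])
```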

Container Services

Next, you'll want to define a Container Service, which provides a set of parameters for running Task Definitions. Here are the settings you'll want to review.

- Launch Type - Choose either EC2 or Fargate; we're using EC2 obviously.

- Task Definition - Choose the Task Definition you want to use.

- Force a New Deployment - If you are updating an existing Container Service specification, you can force a redeploy of the containers associated with the Task.

- Number of Tasks - Specify the number of tasks you want to run. For example, if you put one (1) here, and your Task has one Container Definition, you'll have one Container. If your Task has two Container Definitions, you'll have two containers. Choosing two (2) will result in double the number of containers, and so on.

- Minimum Healthy Percent - The default is 50%, which means that ECS will not ensure that a healthy instance is always running. For instance, if a container is killed because it exceeds your Maximum memory allocation, it will not start a new container before draining the old one. Note, because Port values are fixed in the Container Definition, you need at least two instances running in order to start a new Container before killing an existing one. If you want a healthy instance to always be available, specify 100%.

- Maximum Percent - The default is 100%, which means that ECS will not start more than one Task at a time. If you want to always have a healthy instance, set this to 200%.

- Task Placement - We went with the default AZ Balanced Spread to place containers based on failover.

- Load Balancing - We are using the Application Load Balancer because it's better suited for Microservice architectures: it separates the listening port and target port into separate configs, allowing you to do path- or host-based routing. ECS will manage your ALB, register your containers, etc.

- Health Check Grace Period - Default is zero (0). Depending on how long your container takes to start, you may want to set a value here (in seconds).

- Auto-Scaling - You can elect to auto-scale your Service by setting a maximum number of Tasks to run, and using either Target Tracking (using memory or CPU utilization to trigger scaling) or Step Scaling (using Alarms to trigger scaling).
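Pulling the settings above together, here is a minimal sketch of a request body shaped like the input to the ECS CreateService API. It only builds a dictionary; with boto3 installed and credentials configured it could be passed as `boto3.client("ecs").create_service(**web_service)`. The cluster name, target group ARN, port, and grace period are hypothetical, and I am assuming the console's AZ Balanced Spread corresponds to spreading by Availability Zone and then by instance.

```python
def build_service(cluster, name, task_definition, desired_count,
                  target_group_arn, container_name, container_port,
                  min_healthy_percent=100, max_percent=200, grace_seconds=60):
    """Build a dict shaped like the CreateService request for the EC2 launch type."""
    return {
        "cluster": cluster,
        "serviceName": name,
        "taskDefinition": task_definition,
        "launchType": "EC2",
        "desiredCount": desired_count,
        "deploymentConfiguration": {
            "minimumHealthyPercent": min_healthy_percent,
            "maximumPercent": max_percent,
        },
        # Assumed equivalent of the console's "AZ Balanced Spread" template.
        "placementStrategy": [
            {"type": "spread", "field": "attribute:ecs.availability-zone"},
            {"type": "spread", "field": "instanceId"},
        ],
        "loadBalancers": [
            {
                "targetGroupArn": target_group_arn,
                "containerName": container_name,
                "containerPort": container_port,
            }
        ],
        "healthCheckGracePeriodSeconds": grace_seconds,
    }


web_service = build_service(
    cluster="example-cluster",  # hypothetical cluster name
    name="web-service",
    task_definition="web-task-definition",
    desired_count=2,
    # Hypothetical target group ARN.
    target_group_arn=(
        "arn:aws:elasticloadbalancing:us-east-1:123456789012:"
        "targetgroup/web/0123456789abcdef"
    ),
    container_name="web-container-definition",
    container_port=8080,  # hypothetical port
)
print(web_service["deploymentConfiguration"])
```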

Because we defined a Service, and told ECS we always want one (or more) Tasks running, should one stop, it will be restarted. If you want to replace a running container with a newer one manually, you can SSH to your EC2 instance and kill the container. If you want to automate this, there are some resources on the web, like this feature request on the AWS ECS Agent.

"Determine which of the container instances in your cluster can support your service's task definition (for example, they have the required CPU, memory, ports, and container instance attributes)." - AWS UpdateService

This is easier with Fargate because placement is managed for you. It would be nice if, in a future update of ECS with EC2 and ALB, AWS made it possible for ECS to dynamically define/manage the host machine port within the Container Definition. This would also require that they separate the Port from the Target Group to allow multiple instances of a containerized microservice to run on different ports but still support a single Target Group.

In my opinion, this would allow for more efficient utilization of EC2 Instances, which matters for low-budget operations like ours (at the expense of good fault tolerance). At present, during low traffic periods while running a single instance, if I want to replace one older container with a newer one, ECS cannot bring up the new one before it drains the old one because of port mapping constraints. This results in about 5 minutes of downtime.
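To reason about what ECS is allowed to do during a deployment, it helps to turn the two percentages into task counts. The sketch below assumes the rounding AWS documents for the deployment configuration: the minimum healthy percent is rounded up to a whole number of tasks, and the maximum percent is rounded down. The function name is my own.

```python
def deployment_bounds(desired_count, min_healthy_percent, max_percent):
    """Return (fewest tasks that must stay running, most tasks allowed at once)."""
    # Ceiling division using integers only, to avoid floating point surprises.
    lower = -(-desired_count * min_healthy_percent // 100)
    # Floor division for the upper limit.
    upper = desired_count * max_percent // 100
    return lower, upper


# One task, 100% minimum, 200% maximum: ECS may run two tasks for a while,
# which only helps if the second task can actually be placed somewhere.
print(deployment_bounds(1, 100, 200))

# One task, 0% minimum, 100% maximum: ECS may stop the old task first.
print(deployment_bounds(1, 0, 100))

# Four tasks, 50% minimum, 200% maximum.
print(deployment_bounds(4, 50, 200))
```

In the single-instance case described above, the upper bound of two tasks cannot be used when both tasks need the same fixed host port, which is why the old container has to drain before the new one starts.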

Up and Running

Once you configure and launch the Container Service, it will start your Tasks, and you are up and running. Now the fun begins: debugging any issues. Once your applications are running, you can set CloudWatch Alarms with ALB Metrics or ECS Metrics to monitor your stack. Also, if you sent your logs to CloudWatch Logs, you can use Log Metrics to monitor for events, terms, or HTTP status codes. You could also use Route 53 Health Checks to ensure your site is accessible to the world from one or more AWS Regions.
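As one example of an ECS Metrics alarm, the sketch below builds a request body shaped like the input to the CloudWatch PutMetricAlarm API, watching a Service's average memory utilization. It only builds a dictionary; with boto3 it could be passed as `boto3.client("cloudwatch").put_metric_alarm(**alarm)`. The cluster name, threshold, evaluation settings and SNS topic ARN are hypothetical.

```python
def build_memory_alarm(cluster, service, threshold_percent, topic_arn):
    """Build a dict shaped like the PutMetricAlarm request for an ECS Service."""
    return {
        "AlarmName": f"{service}-memory-high",
        "Namespace": "AWS/ECS",
        "MetricName": "MemoryUtilization",
        "Dimensions": [
            {"Name": "ClusterName", "Value": cluster},
            {"Name": "ServiceName", "Value": service},
        ],
        "Statistic": "Average",
        "Period": 300,           # seconds per datapoint (hypothetical choice)
        "EvaluationPeriods": 2,  # consecutive breaching periods (hypothetical choice)
        "Threshold": float(threshold_percent),
        "ComparisonOperator": "GreaterThanThreshold",
        "AlarmActions": [topic_arn],
    }


alarm = build_memory_alarm(
    cluster="example-cluster",  # hypothetical cluster name
    service="web-service",
    threshold_percent=80,       # hypothetical threshold
    topic_arn="arn:aws:sns:us-east-1:123456789012:ops-alerts",  # hypothetical
)
print(alarm["AlarmName"], alarm["Threshold"])
```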

Blue|Green Deployments

If you want to do Blue|Green Deployments, where you are running multiple versions of your entire stack side by side, you can achieve this using Container Services. AWS has written a good how-to blog and released some code on GitHub to achieve this configuration.

Observations

(Updated 6/21/2018) It has been nearly five months, and ECS has been great/reliable. I am using the same instance sizes (m4.large) before and after the move to ECS, with the same number of containers. While I have made some software upgrades, etc., I have noticed that the memory utilization has been consistently better/lower while using ECS ... but I am not sure why yet. Prior to moving, memory utilization was typically at 80%. Since the move, it hovers around 30%. I am happy about it, but will dig into this to see what changed.